Google DeepMind has introduced AlphaEvolve, an AI-powered coding agent designed to discover and improve algorithms for a range of computing tasks, from mathematics to hardware design. It pairs large language models (LLMs) from the Gemini family, which propose changes to code, with automated evaluators that check and score each proposal. Rather than producing a single answer, AlphaEvolve works within an evolutionary framework. It keeps the most promising programs, uses them as the starting point for new proposals, and repeats the cycle, gradually arriving at faster or more accurate solutions.
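
The generate, evaluate and select cycle described above can be sketched in a few lines of Python. This is a toy illustration under stated assumptions, not AlphaEvolve's implementation. The hypothetical `propose_variant` function stands in for the Gemini models by randomly perturbing a list of numbers. The hypothetical `evaluate` function plays the role of an automated evaluator by scoring how close a candidate is to a fixed target.

```python
import random
from typing import Callable, List, Tuple

Candidate = List[float]


def propose_variant(parent: Candidate, rng: random.Random) -> Candidate:
    # Hypothetical stand-in for the LLM step: AlphaEvolve asks Gemini to
    # propose code changes; here we just nudge one number at random.
    child = parent[:]
    i = rng.randrange(len(child))
    child[i] += rng.gauss(0.0, 0.5)
    return child


def evaluate(candidate: Candidate) -> float:
    # Hypothetical automated evaluator: higher is better (0.0 is perfect).
    target = [3.0, -1.0, 2.0]
    return -sum((c - t) ** 2 for c, t in zip(candidate, target))


def evolve(
    initial: Candidate,
    score: Callable[[Candidate], float],
    generations: int = 200,
    population_size: int = 8,
    seed: int = 0,
) -> Tuple[Candidate, float]:
    rng = random.Random(seed)
    population: List[Tuple[Candidate, float]] = [(initial, score(initial))]
    for _ in range(generations):
        # Pick a parent via a small tournament, favouring high scorers.
        contenders = rng.sample(population, min(3, len(population)))
        parent, _ = max(contenders, key=lambda p: p[1])
        child = propose_variant(parent, rng)
        population.append((child, score(child)))
        # Keep only the most promising candidates for the next round.
        population.sort(key=lambda p: p[1], reverse=True)
        del population[population_size:]
    return population[0]


if __name__ == "__main__":
    best, best_score = evolve([0.0, 0.0, 0.0], evaluate)
    print(f"best candidate: {best}, score: {best_score:.4f}")
```

Because the best candidates are always kept, the top score can never get worse from one generation to the next. The real system's selection and prompting are considerably more elaborate than this sketch.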

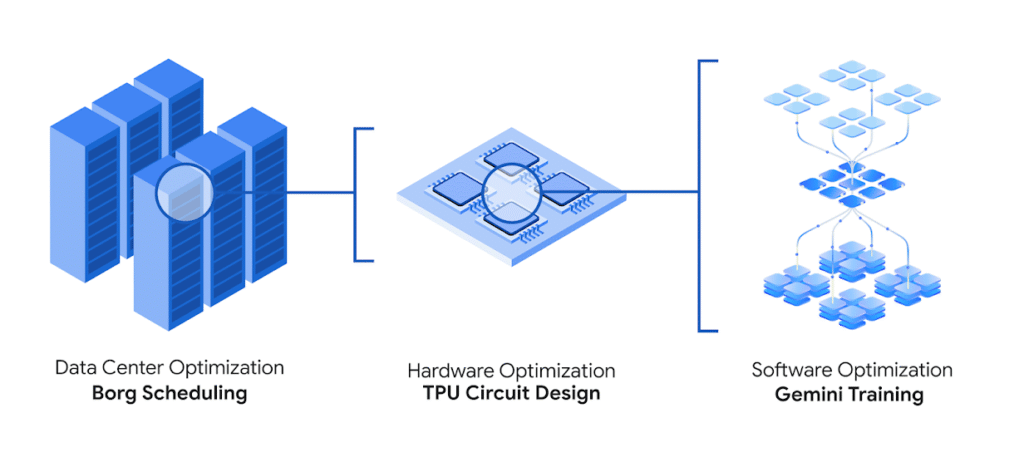

AlphaEvolve’s impact is already visible across Google’s computing ecosystem, including data centres, chip design and AI training. Among other things, it found a better way to split a large matrix multiplication into smaller subproblems. This sped up a key kernel in Gemini’s architecture by 23% and cut Gemini’s overall training time by about 1%. It has also contributed to the design of Google’s custom AI accelerators. AlphaEvolve proposed a change to the Verilog code of an arithmetic circuit used for matrix multiplication, and the change was integrated into an upcoming TPU, Google’s AI chip. The example shows how AI-generated suggestions can be checked and adopted by the hardware engineers who build Google’s infrastructure.

Beyond Google’s own infrastructure, AlphaEvolve has advanced algorithm development and optimisation in two further areas: low-level GPU instructions and mathematical research. On the GPU side, it achieved up to a 32.5% speedup for the FlashAttention kernel implementation, a core component of Transformer-based AI models. Faster kernels of this kind can shorten training times and reduce energy consumption, making AI research cheaper to run. By automating such complex optimisations, AlphaEvolve frees researchers to focus on other aspects of AI development.

In mathematics, AlphaEvolve designed components of a new gradient-based optimisation procedure that discovered several new algorithms for matrix multiplication. One of these multiplies 4×4 complex-valued matrices using 48 scalar multiplications. Results like this advance mathematical research and can also translate into practical gains in the computational efficiency of AI models. Rather than competing with general-purpose models such as GPT-4 on broad tasks, AlphaEvolve is aimed at specialised research problems whose candidate solutions can be verified automatically.
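
Progress in matrix multiplication is usually measured by how many scalar multiplications an algorithm needs. The Python sketch below illustrates that kind of saving with a classic example. It uses Strassen's 1969 scheme, which is not an algorithm discovered by AlphaEvolve, to multiply two 2×2 matrices with seven multiplications instead of the textbook eight. The function names are illustrative only.

```python
from typing import List

Matrix = List[List[int]]


def naive_2x2(A: Matrix, B: Matrix) -> Matrix:
    # Textbook method: 8 scalar multiplications.
    return [
        [A[0][0] * B[0][0] + A[0][1] * B[1][0], A[0][0] * B[0][1] + A[0][1] * B[1][1]],
        [A[1][0] * B[0][0] + A[1][1] * B[1][0], A[1][0] * B[0][1] + A[1][1] * B[1][1]],
    ]


def strassen_2x2(A: Matrix, B: Matrix) -> Matrix:
    # Strassen (1969): 7 scalar multiplications, paid for with extra additions.
    (a, b), (c, d) = A
    (e, f), (g, h) = B
    m1 = (a + d) * (e + h)
    m2 = (c + d) * e
    m3 = a * (f - h)
    m4 = d * (g - e)
    m5 = (a + b) * h
    m6 = (c - a) * (e + f)
    m7 = (b - d) * (g + h)
    return [
        [m1 + m4 - m5 + m7, m3 + m5],
        [m2 + m4, m1 - m2 + m3 + m6],
    ]


if __name__ == "__main__":
    A = [[1, 2], [3, 4]]
    B = [[5, 6], [7, 8]]
    print(strassen_2x2(A, B))  # [[19, 22], [43, 50]]
```

Applied recursively to blocks of larger matrices, saving one multiplication per 2×2 step lowers the cost from O(n³) to roughly O(n^2.81). This is why shaving even a single multiplication off a small case counts as progress.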

AlphaEvolve’s impact extends beyond algorithm development to data centre efficiency and hardware design. In Google’s data centres, it discovered a scheduling heuristic for the Borg cluster manager that recovers, on average, 0.7% of Google’s worldwide compute resources. To generate its proposals, AlphaEvolve uses an ensemble of Gemini models. Gemini Flash explores a broad range of ideas, and Gemini Pro provides deeper, more detailed suggestions. Earlier LLM applications focused mainly on text generation. By contrast, AlphaEvolve can deliver verified improvements to code and hardware designs, which makes it useful for industries that depend on large-scale computing infrastructure.

Looking ahead, Google DeepMind plans to keep refining AlphaEvolve’s capabilities in algorithm development and optimisation and to make them more accessible. The first step is a planned early access programme for selected academic users. Wider access could accelerate research and productivity across industries, particularly in areas such as data centre management and advanced hardware design. By reducing the computational resources that large-scale projects require, AlphaEvolve could become a powerful tool for future AI development.
